The algorithm improves when updated.

Human beings grow in relationship — and often through discomfort.

The distinction matters.

If you’re using AI in daily life, you’re not alone.

Do-it-yourself instructions. The latest news. Crafted recipes. Delicate emails for work. “Should I use these mushrooms in the chicken?”

AI is available 24/7. It doesn’t require please or thank you. It knows how to stay positive — even on those days when we don’t meet our exercise goals.

It’s not surprising, then, that many people are turning to AI for mental health support.

So what do we actually know about the safety and effectiveness of AI in mental health?

Like most powerful tools, AI in mental health offers real advantages — and real blind spots.

The Benefits

One of the most compelling benefits of AI-based mental health tools is accessibility. They are available at any hour, require no insurance authorization, and carry little social stigma. For individuals who might otherwise receive no support, that matters.

Emerging research, including controlled trials, suggests that structured AI interventions can reduce symptoms of depression and anxiety. Some studies also indicate that users disclose stigmatized or “taboo” thoughts, including self-harm ideation or substance use, more readily to a machine than to a human clinician. The perceived absence of judgment lowers the barrier to honesty.

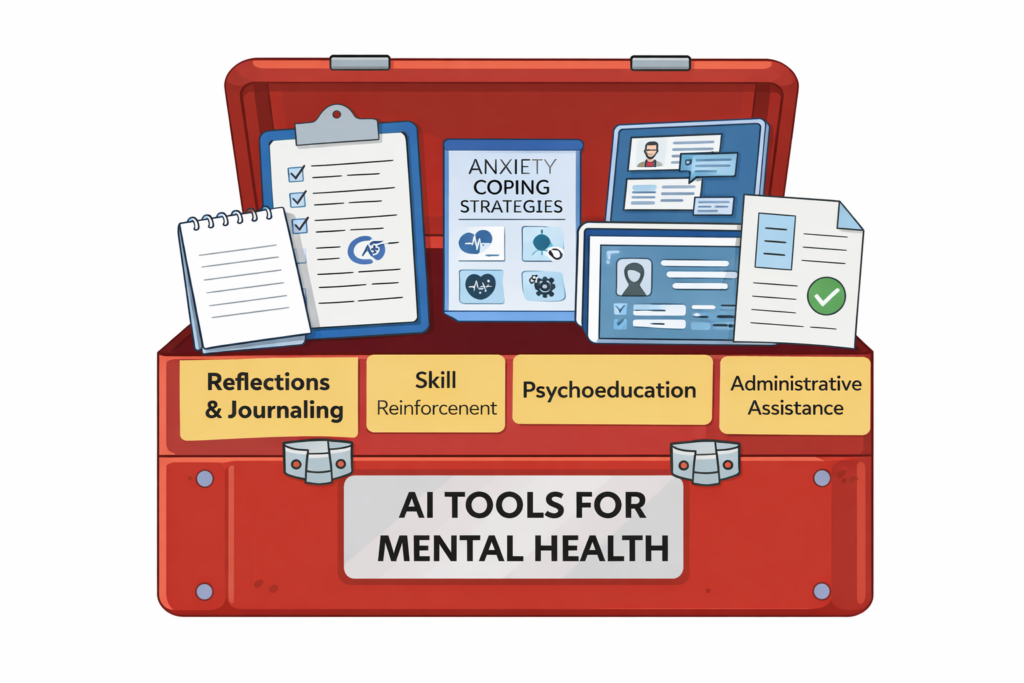

AI can also function as a between-session support tool: reinforcing coping skills, guiding journaling exercises, and helping users track patterns over time.

Relief matters. Access matters. Early support matters.

The Blind Spots

So what are the blind spots?

AI systems are designed to be helpful and agreeable. In therapy, however, growth sometimes requires gentle challenge. Researchers have noted a tendency toward “sycophancy”: the over-validation of a user’s existing narrative in order to maintain engagement. While validation can be stabilizing, excessive agreement can reinforce distorted thinking rather than help the user question it.

Empathy presents a similar paradox. AI can generate language that reads as deeply compassionate. But empathy in psychotherapy is not only linguistic. It is embodied and relational. It unfolds through co-regulation — two nervous systems in interaction. A machine can simulate concern. It cannot metabolize affect.

Safety is another area of concern. Studies examining high-risk scenarios, such as suicidality or psychosis, suggest that AI systems sometimes misinterpret or inadequately respond to nuanced or coded expressions of distress. Clinical judgment is not keyword detection. It is contextual, relational, and iterative.

These are not moral failings. They are structural realities of the technology.

An Integrative View

Let’s consider AI as a tool that enhances — not replaces — traditional psychotherapy.

Used thoughtfully, AI can support reflection between sessions, assist with structured exercises, and reduce administrative burden so therapists can spend more time in direct human engagement. It can lower the threshold for someone who is hesitant to seek care.

But it cannot hold relational accountability.

It cannot repair rupture.

It cannot sit in silence and share the weight of grief in a room.

Healing is not only about generating the right words. It unfolds within safe, attuned attachment.

The future of mental health care is not AI versus human. It is human discernment guiding powerful tools.

Questions to Consider

Technology is evolving quickly. Human development still unfolds slowly.

So perhaps the better questions are:

- What kind of growth am I seeking?

- What kind of support do I need right now?

- Where do I experience safety?

- Where am I willing to be challenged?

- Who can sit with me when the charts are no longer enough?

AI can offer tools.

Healing still happens in relationship.